Moonlight Gaming

This setup is a LAN-based cloud gaming environment built from three gaming PC hosts. The systems run headless with no keyboard, mouse or monitor. Each system connects only to power, Ethernet, and a dummy HDMI/DisplayPort adapter to enable GPU output.

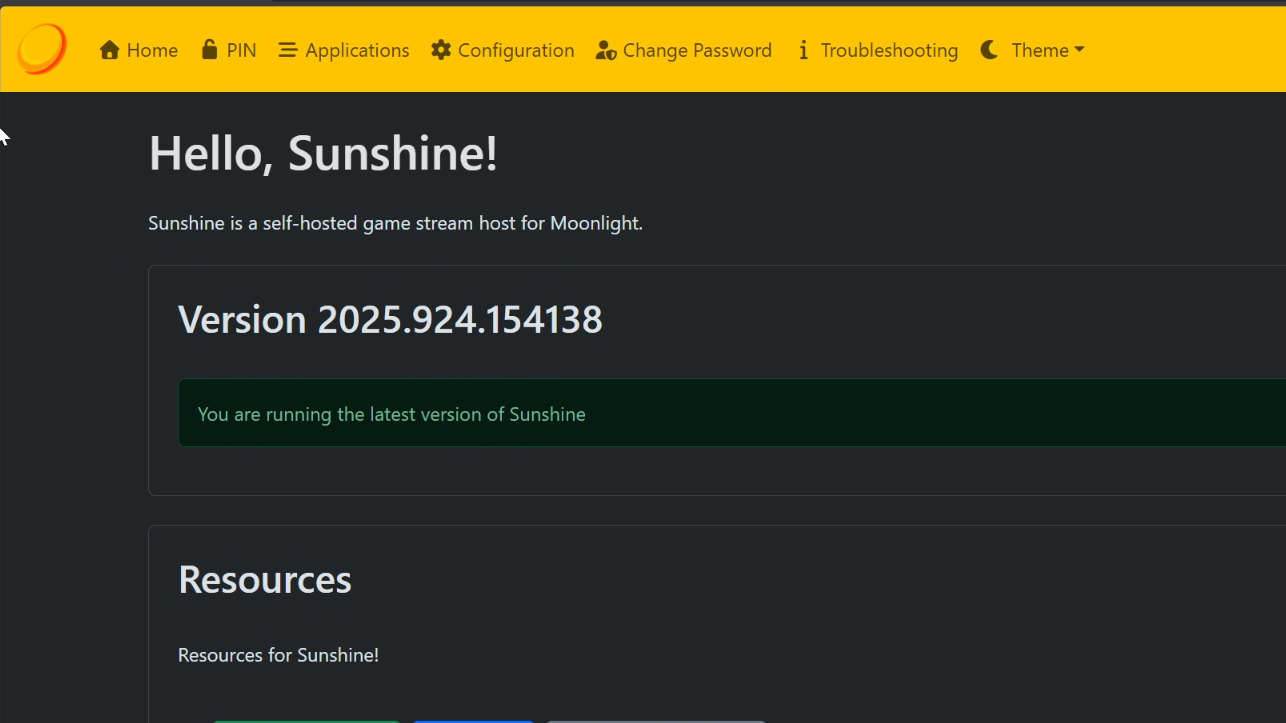

Games run locally on the host machines and stream over the network using Sunshine and Moonlight. Devices around the house connect to the hosts, so my family can play from any room.

Hosting Architecture

Three dedicated gaming PCs provide the resources for the platform.

Moonlight 56

Ryzen 5 5600G + 32GB + RTX 3070

Moonlight

Ryzen 7 5700X + 32GB + RTX 3070

Moonlight XL

Ryzen 9 9900X + 64GB + RTX 5070 Ti

Each machine runs locally installed games while Sunshine handles video encoding and streaming.

The hosts remain powered on and accessible across the LAN so different family members can connect and stream games to any device on the network.
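Since the hosts are headless, a quick way to confirm they are still accepting connections is a TCP probe from any machine on the LAN. The sketch below is only an illustration: the `.lan` hostnames are hypothetical placeholders, and port 47989 is assumed to be Sunshine's default base port, so both should be adjusted to match the actual configuration.

```python
import socket

# Hypothetical hostnames: the machines' real addresses are not given here.
HOSTS = {
    "Moonlight 56": "moonlight-56.lan",
    "Moonlight": "moonlight.lan",
    "Moonlight XL": "moonlight-xl.lan",
}

# Assumption: Sunshine is listening on its default base port.
SUNSHINE_PORT = 47989


def is_reachable(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and failed name lookups.
        return False


def check_hosts(hosts: dict[str, str], port: int = SUNSHINE_PORT) -> dict[str, bool]:
    """Probe every host once and map its display name to reachability."""
    return {name: is_reachable(addr, port) for name, addr in hosts.items()}
```

Calling `check_hosts(HOSTS)` returns a dictionary of host names mapped to `True` or `False`, which is enough to feed a dashboard or a scheduled alert.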

Headless Infrastructure

Running the hosts without local displays or peripherals allows them to behave more like infrastructure nodes than traditional gaming PCs.

This design allows the system to deliver high-quality video streams with minimal delay across the house.

Low Latency Local Routing

Streaming traffic remains entirely inside the LAN. Game frames are encoded on the host and delivered directly to client devices without leaving the local network.
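One way to sanity-check that a session really is LAN-only is to confirm that the host and client addresses fall inside the same local subnet. This is a minimal sketch using Python's `ipaddress` module; the `192.168.1.0/24` range and the function name are assumptions for illustration, not details of this network.

```python
import ipaddress

# Assumption: a typical home subnet. Replace with the real LAN range.
LAN = ipaddress.ip_network("192.168.1.0/24")


def stays_on_lan(host_ip: str, client_ip: str, lan=LAN) -> bool:
    """True when both endpoints sit inside the given LAN subnet."""
    return (
        ipaddress.ip_address(host_ip) in lan
        and ipaddress.ip_address(client_ip) in lan
    )
```

If the check returns `False` for a client, that device is reaching the host through a routed or remote path rather than directly across the local network.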

Network Architecture

Because Moonlight streaming is sensitive to both latency and throughput, the internal network was upgraded to support 2.5 Gbps connectivity between gaming hosts and the core network infrastructure.

The design focuses on minimizing network bottlenecks and jitter during gameplay streaming.
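Jitter can be put into numbers by collecting round-trip times to a host (for example from `ping`) and averaging the change between consecutive samples. The function below is a simple sketch of that calculation; the name `jitter_ms` and the choice of metric (mean absolute difference between consecutive samples) are illustrative assumptions, not something Sunshine or Moonlight reports in this form.

```python
from statistics import mean


def jitter_ms(rtts_ms: list[float]) -> float:
    """Mean absolute difference between consecutive round-trip times, in ms."""
    if len(rtts_ms) < 2:
        # A single sample has no variation to measure.
        return 0.0
    return mean(abs(b - a) for a, b in zip(rtts_ms, rtts_ms[1:]))
```

Comparing this value before and after a network change gives a rough indication of whether the change made frame delivery steadier.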

Lessons Learned

The system originally began as an attempt to run Moonlight gaming hosts inside my Proxmox virtualization cluster using GPU passthrough.

Although the approach technically worked, it was difficult to maintain.

Network Bridge Complexity

Running the gaming hosts inside VMs meant network traffic passed through additional virtual networking layers. Moving the systems to dedicated hardware meant I no longer had to rebuild the virtual bridge every time I changed hardware.

GPU Sharing

Dedicating a GPU to passthrough prevented me from using it for other workloads. It also added a lot of complexity: I had to rewrite the device blacklists whenever I made a change to the servers.